Printed artificial leather roller skates for men and women, outdoor skating sport shoes with 4 wheels, European sizes 35-45, buy online | Outlet < www.cateringkodane.com.hr

DIVNA Supermarket - Soy Luna roller skates are back in stock. Please note that these are original Soy Luna roller skates. | Facebook

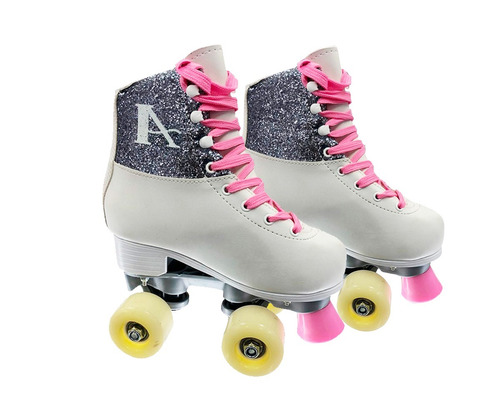

Silver double-row roller skates for women and men, artificial leather skating shoes, quad skate sneakers for adult beginners, 4 wheels, sale / Sports and entertainment < www.apartmani-hasic.com.hr